Shipping an AI agent is easy; keeping it trustworthy in production is not. Point-in-time audits fall short as new failures emerge and attacks evolve. Teams must assess agent behavior across reliability, safety, and security risks before deployment, and keep learning from production afterward.

This session introduces the Agent Trust Lifecycle: a framework spanning testing for reliability, safety, and security; runtime guardrails; and production observability, with continuous feedback into better agents.

Through real-world examples, we’ll cover advanced testing, effective guardrails, agent-specific observability, and using production signals to improve agents without introducing regressions. Attendees leave with a practical model for building and hardening agents.

Speaker

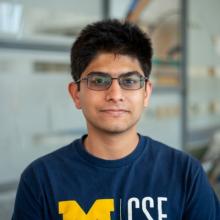

Anuj Tambwekar

Founding Machine Learning Engineer @Vijil.ai

Engineer at vijil.ai working on making AI agents more trustworthy, with primary interests in ML safety and its applications in safety-critical systems.

Session Sponsored By

Vijil bridges the agentic lifecycle trust gap, helping to build, deploy and improve resilient agents.